A few weeks ago I wrote about DALL-E 2, a new machine learning model by OpenAI that can create images from a text description of a scene. In my blog post, I explained how DALL-E 2 introduces a form of generative AI, enabling users to create images from scratch or to alter existing ones.

Last week, OpenAI introduced ChatGPT, a machine learning model that interacts in a conversational way, based on OpenAI’s GPT-3 family of language models (specifically, a model from the GPT-3.5 series).

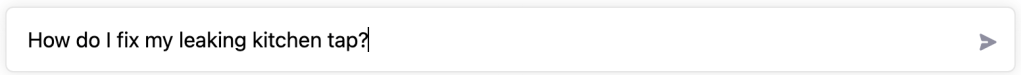

You can enter a question and OpenAI’s chatbot will come back with a (detailed) response (you can access ChatGPT here). For example:

I enter a question about fixing my leaking kitchen tap and ChatGPT starts generating a response. Within a few seconds I’ve got a pretty comprehensive answer:

I’m curious to see what the response is to my question about a new talk on systems thinking that I’m working on:*

* Yes, I know it feels lazy to ask a machine-based language model for input into a presentation. However, I can imagine that this will quickly become a real use case for presenters and students the world over …

Again, the response was generated in mere seconds and provides a comprehensive structure to a generic talk about systems thinking. Arguably, the points returned could easily apply to a presentation of any kind, with the exception of points 3 and 4.

Finally, I want to know about today’s weather forecast:

This is where I learn about some of the limitations of the current version of ChatGPT:

Google doesn’t have to worry yet about competition from ChatGPT and applications built on top of it. (If you ask 1littlecoder, however, ChatGPT already poses a threat to Google, making it obsolete for programmers). There are some other limitations built into the current version of ChatGPT:

- Beliefs or opinions – The model refuses to answer questions about opinions or beliefs.

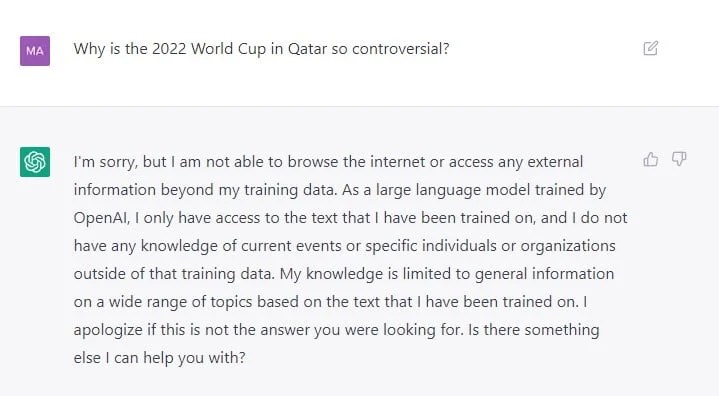

- People, topics or current affairs – The model won’t provide answers to questions related to people or current affairs (see Qatar World Cup example below).

- Small prompt changes – Small changes in how a person words a prompt can cause big changes in ChatGPT’s answers. Depending on the phrasing, the model may decline to answer a question, answer it incorrectly, or answer it correctly.

By the way, OpenAI is very open about the current limitations of ChatGPT:

Finally, these are some of the many use cases and opportunity areas where I can see ChatGPT fulfilling an important role:

- Generate content and copy – This is already happening with products such as Copy.ai and Jasper.ai.

- Write software – Put in code commands or questions and ChatGPT will generate the code. Stack Overflow has already (temporarily) banned AI-generated answers on its Q&A platform.

- Provide customer support – Thinking of the bots I worked on at Intercom, applying ChatGPT will significantly reduce the workload currently placed on humans to train the bots that underpin self-serve customer experiences.

- Improve productivity – Whether it’s about generating quick answers (think tutoring!), plans or repetitive tasks, I can see numerous productivity apps emerging from ChatGPT.

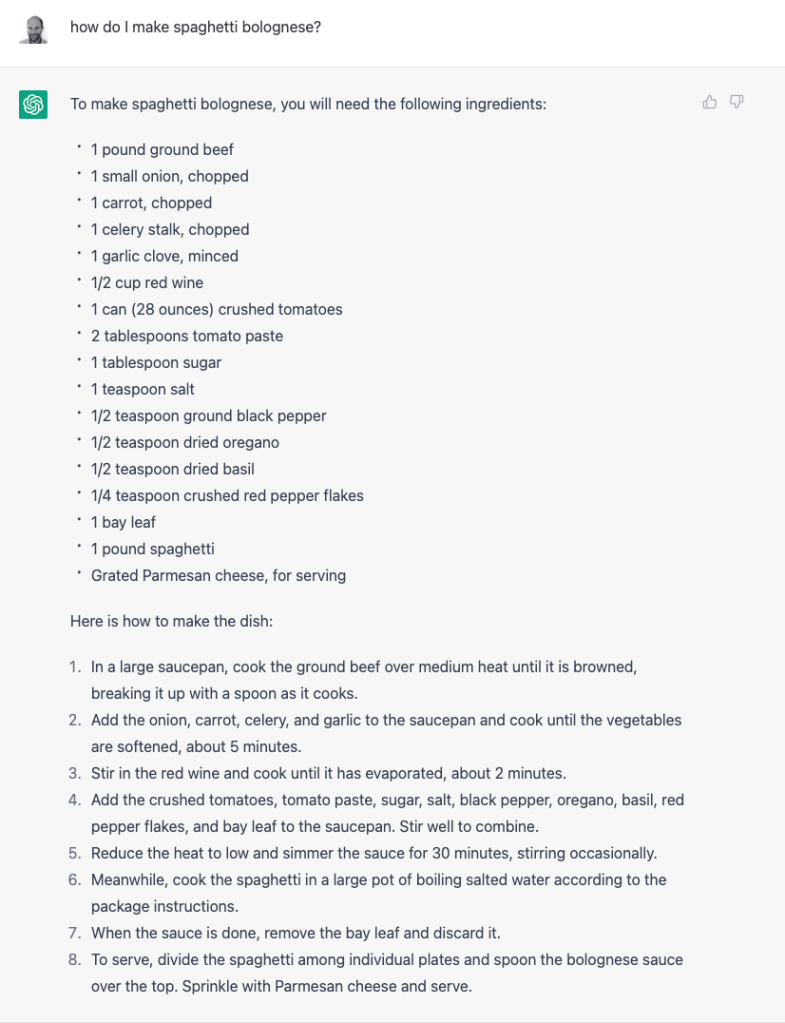

- Create recipes – Will we see the end of cookery books?!

Main learning point: Wow, this is exciting! And scary at the same time. I know it’s early days and that there are all kinds of limitations to the technology, but it’s easy to see the transformative impact of ChatGPT on a whole host of use cases and business models. Generative AI is here to stay!

Related links for further learning:

- https://voicebot.ai/2022/12/04/openais-new-chatgpt-adds-personality-and-trivia-to-gpt-3/

- https://openai.com/blog/chatgpt/

- https://openai.com/blog/gpt-3-apps/

- https://the-decoder.com/chatgpt-is-a-gpt-3-chatbot-from-openai-that-you-can-test-now/

- https://medium.com/data-science-rush/chatgpt-from-openai-is-amazing-ad31544dc4b6

- https://www.bleepingcomputer.com/news/technology/openais-new-chatgpt-bot-10-coolest-things-you-can-do-with-it/

- https://openai.com/blog/instruction-following/

- https://mashable.com/article/chatgpt-amazing-wrong

- https://www.healthcareitnews.com/blog/sentient-ai-convincing-you-it-s-human-just-part-lamda-s-job

- https://the-decoder.com/deepminds-new-chatbot-is-more-helpful-correct-and-harmless/

- https://neuroflash.com/the-best-use-cases-for-chatgpt/

- https://www.bleepingcomputer.com/news/technology/openais-new-chatgpt-bot-10-dangerous-things-its-capable-of/

- https://www.theverge.com/2022/12/5/23493932/chatgpt-ai-generated-answers-temporarily-banned-stack-overflow-llms-dangers
