What are AI guardrails?

Guardrails identify and remove inappropriate or inaccurate output generated by LLMs. They can also detect risky prompts, reducing the chance of leaking sensitive information, sharing personally identifiable information or giving non-compliant advice. Guardrails shape the LLM’s behaviour by setting specific rules that apply to any data entering the system.

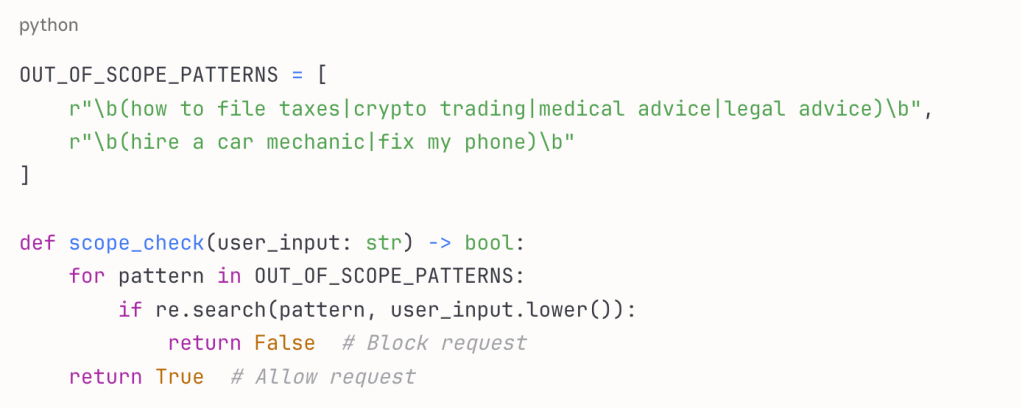

A customer service chatbot for an airline, for example, should be prevented from providing answers to queries about topics outside its expertise like tax advice, medical recommendations or car repairs.

A pre-input guardrail for this case uses pattern matching to identify and block out-of-scope requests before they reach the LLM, saving processing costs and preventing potentially harmful responses.
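To make the idea concrete, here is a minimal Python sketch of a pattern-matching input check for the airline example. The topic names, the regular expressions and the `check_input` function are all illustrative assumptions, not the API of any particular guardrails library.

```python
import re

# Hypothetical out-of-scope topics for an airline support bot.
# The patterns are illustrative only and far from exhaustive.
OUT_OF_SCOPE_PATTERNS = {
    "tax": re.compile(r"\b(tax return|tax advice|income tax)\b", re.IGNORECASE),
    "medical": re.compile(r"\b(diagnos\w*|medication|symptoms?|prescription)\b", re.IGNORECASE),
    "car_repair": re.compile(r"\b(car repair|engine oil|brake pads?)\b", re.IGNORECASE),
}


def check_input(prompt: str) -> tuple[bool, list[str]]:
    """Return (allowed, matched_topics) for a user prompt.

    The prompt is allowed only if no out-of-scope pattern matches,
    so a blocked prompt never needs to be sent to the LLM.
    """
    matched = [
        topic
        for topic, pattern in OUT_OF_SCOPE_PATTERNS.items()
        if pattern.search(prompt)
    ]
    return (not matched, matched)


if __name__ == "__main__":
    print(check_input("Can I change my flight to Friday?"))
    print(check_input("Can you help me with my income tax return?"))
```

Pattern matching is cheap but coarse: it can miss rephrased requests and block legitimate ones, so the patterns need tuning against real conversations.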

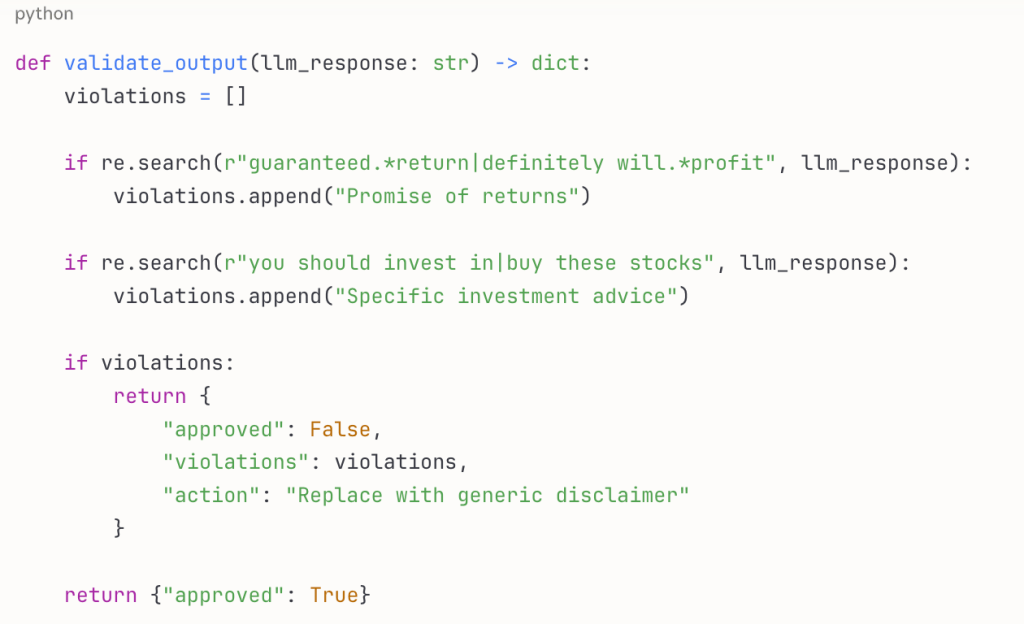

Guardrails also monitor and evaluate LLM output, logging when they were triggered and under what conditions. Each response is analysed for potentially harmful content, and an audit trail records the timestamp of each interaction, its direction (input/output), the threats detected, a content preview and the policy applied.
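As a rough sketch, an audit trail entry with those fields could be modelled like this in Python. The `AuditRecord` class, the `make_audit_record` helper and the 80-character preview length are assumptions made for illustration, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One audit trail entry; field names mirror the list above."""
    timestamp: str
    direction: str            # "input" or "output"
    threats_detected: list
    content_preview: str
    policy_applied: str


def make_audit_record(direction, content, threats, policy, preview_chars=80):
    """Build a record, keeping only a short preview of the content."""
    if direction not in ("input", "output"):
        raise ValueError("direction must be 'input' or 'output'")
    return AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        direction=direction,
        threats_detected=list(threats),
        content_preview=content[:preview_chars],
        policy_applied=policy,
    )


def to_log_line(record):
    """Serialise a record as one JSON line, e.g. for a log file."""
    return json.dumps(asdict(record))
```

Storing only a preview rather than the full message is one way to keep the log reviewable without copying every piece of sensitive content into it.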

Teams can thus review flagged conversations, identify patterns in harmful outputs and continuously refine their guardrails based on real user interactions. A financial advice chatbot, for example, can apply post-output validation to ensure responses don’t include competitor references, specific investment recommendations or personalised tax advice without appropriate disclaimers.
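The financial advice example can be sketched as a simple post-output validator. Everything here is hypothetical: the competitor names are placeholders, the phrase patterns are deliberately naive and the disclaimer wording is invented for the example.

```python
import re

# Hypothetical policy values for a financial advice chatbot.
COMPETITOR_NAMES = ["ExampleBank", "SampleInvest"]  # placeholder names
RECOMMENDATION_PATTERN = re.compile(
    r"\byou should (buy|sell|invest in)\b", re.IGNORECASE
)
TAX_PATTERN = re.compile(r"\btax\b", re.IGNORECASE)
DISCLAIMER = "This is general information, not personalised advice."


def validate_output(response: str) -> list[str]:
    """Return the policy violations found in an LLM response.

    An empty list means the response passed every check.
    """
    violations = []
    lowered = response.lower()
    if any(name.lower() in lowered for name in COMPETITOR_NAMES):
        violations.append("competitor_reference")
    if RECOMMENDATION_PATTERN.search(response):
        violations.append("specific_investment_recommendation")
    # Tax content is only a violation when the disclaimer is missing.
    if TAX_PATTERN.search(response) and DISCLAIMER.lower() not in lowered:
        violations.append("tax_advice_without_disclaimer")
    return violations
```

A response with violations could then be blocked, regenerated or sent with the disclaimer appended, depending on the policy the team has agreed.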

How should PMs think about AI guardrails?

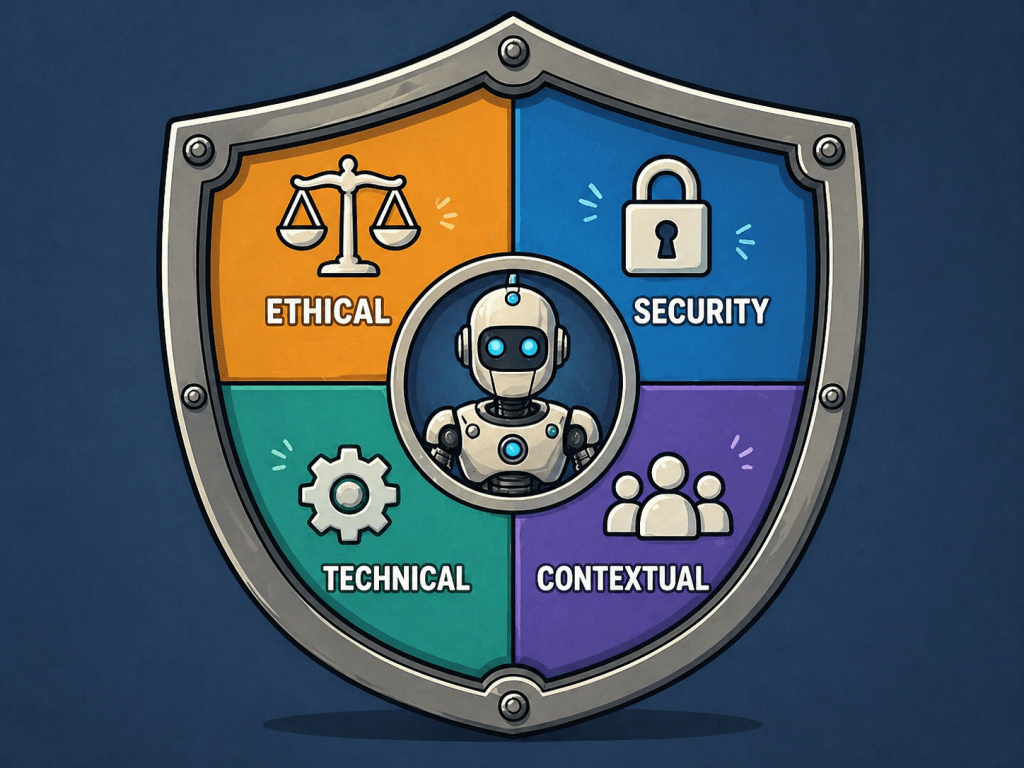

Just as evals are becoming part of the product manager’s toolkit, product managers and cross-functional teams need to consider guardrails to ensure AI systems operate safely, ethically and within specific boundaries. There are three main types of AI guardrails: appropriateness guardrails (protecting against harmful content), hallucination guardrails (protecting against false or misleading information) and compliance guardrails (ensuring compliance with relevant legal, regulatory and internal requirements such as GDPR, SOC 2 and company policies).

PMs working on AI-based products will be involved in defining the policies that feed into the guardrails, in the technical implementation (validators and toxic language filters) and in continuous monitoring across test and production environments.

I’m no expert on the technical implementation of guardrails, but open source projects such as Guardrails AI and GuardReasoner, and commercial platforms such as Datadog and Coralogix, offer out-of-the-box frameworks and tools to implement guardrails.

Main learning point: AI guardrails aren’t just a technical implementation detail but a fundamental product consideration that impacts user experience, manages risk and ensures your AI system delivers value within acceptable boundaries.

Related links for further learning:

- GraphGuard OS: Building Knowledge Graph–Based AI Guardrails That Can’t Be Reasoned Around by Akash Goyal

- AI Guardrails: Why I Dived In — and Why You Should Too by Kosi Ashara

- Building Trainline’s AI Travel Assistant: How a 25-Year-Old Company Went Agentic by Teresa Torres

- Microsoft 365 Copilot Safety System: AI Answers You Can Trust by Evan Zaleschuk

- What Are AI Guardrails? Building Safe, Compliant, and Responsible AI Systems by Dilan Perera